I know the practice is frowned upon, but I did some vibe coding recently for the first time.

I don’t know how to code. I understand some basics, like “if this, do that, else do something else” or the concept of using a database to store and retrieve data, but when it comes to writing code, I don’t know how to do it.

Even with this limitation, over the years I have done a few things in PHP. It often involves many attempts and using existing code as a base, but it has been enough to create basic WordPress themes (like the one I’m currently using here) and add small features. I have a page that allows me to upload files when I want to share them online. And I’ve also been using bash scripts and crons for tasks ranging from backups to other maintenance jobs.

With the exception of the WordPress themes, nothing is exposed to the internet and I’m also the only person using these tools, so I don’t have to worry about the code not being super secure or account for every edge case. That’s why I’m confident enough to do this.

Recently, I was looking at my upload page and I wasn’t happy with it. It didn’t have a dark theme, the code could handle name collisions, but not in a smart way, and file name sanitisation worked, but wasn’t perfect (e.g. if a file name had two spaces, it would add two “_”, and it would remove characters like “à” instead of converting them to “a”). It would be nice if I could improve it, and since people have been using “AI”/LLMs to code, I decided to give it a try and learn more about it in the process.
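
To make the two sanitisation problems concrete, here is a minimal sketch of the behaviour I was after. It is written in Python for illustration only (the real page is PHP), and the function name `sanitise_filename` and the exact set of allowed characters are assumptions, not what the page actually uses.

```python
import re
import unicodedata


def sanitise_filename(name: str) -> str:
    """Return an ASCII-only file name with single underscores as separators."""
    # Split accented letters into a base letter plus a combining accent
    # ("à" becomes "a" + accent), then drop everything that is not ASCII.
    decomposed = unicodedata.normalize("NFKD", name)
    ascii_only = decomposed.encode("ascii", "ignore").decode("ascii")

    # Separate the extension so the dot in front of it survives.
    stem, dot, ext = ascii_only.rpartition(".")
    if not dot or not stem:
        stem, ext = ascii_only, ""

    # Collapse each run of unwanted characters (including repeated
    # spaces) into a single underscore.
    stem = re.sub(r"[^A-Za-z0-9-]+", "_", stem).strip("_") or "file"
    ext = re.sub(r"[^A-Za-z0-9]+", "", ext)
    return f"{stem}.{ext}" if ext else stem


print(sanitise_filename("my  holiday \u00e0 la plage.JPG"))
```

The two spaces end up as one “_” and “à” is kept as “a” rather than being removed.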

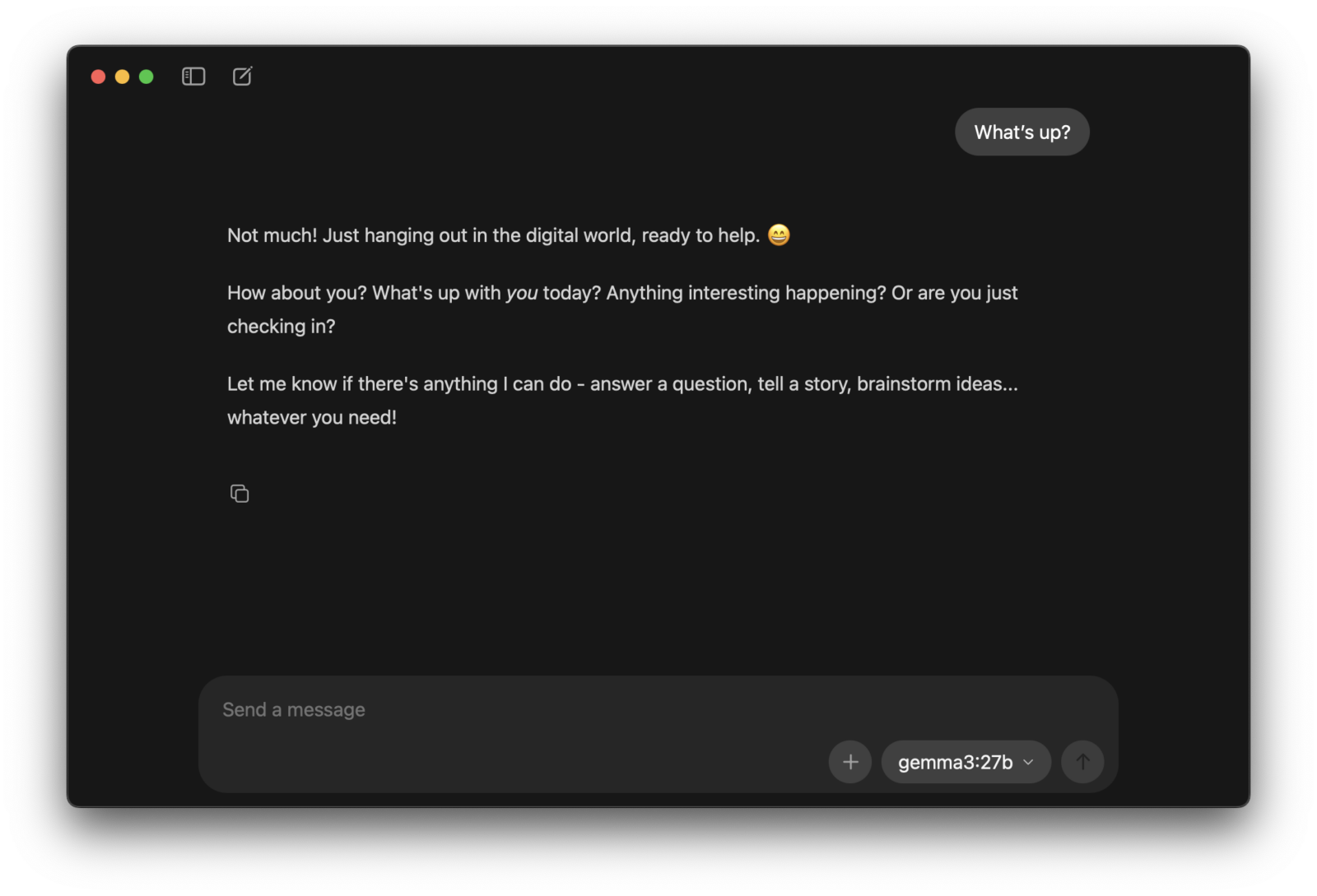

Ollama and the File Uploader

I have a fairly powerful machine – a MacBook Pro with the M4 Max and 48GB of memory – which can run some models locally at acceptable speeds. I’m a noob, so a while back I installed Ollama, a free program that makes it simple to download a model and then have a chat with it. I like the idea of being able to run these locally. I had used the smaller deepseek-r1:8b and gemma3:27b models to translate text locally before, but they don’t seem to be that good for coding, so after a few searches I ended up using qwen3-coder:30b.

The new page is now ready, so I can say that I managed to do what I wanted to, but it was a learning process with a lot of back and forth, removing and re-adding features, improving my instructions so they didn’t have different meanings, being caught in “loops” and having to use more powerful models.

The first thing I learned is that qwen3-coder:30b worked much better when I gave it one or two instructions at a time; with more, it would often forget some of them. It would also make unrelated changes, like adding paragraphs of text or changing the styling, which forced me to add “do not change the styling or other features” (or something along those lines). Still, going step by step, and with some instructions to verify something I thought was wrong or not working well, to clean up unused code or styling, to simplify code, etc., I managed to get to a point where I had a page that allowed me to upload files, open or copy a link, delete them and convert images to WebP, with very good file name sanitisation and a good way to handle files with the same name: it compares checksums and uses sub-folders to avoid renaming the file.
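
The checksum and sub-folder idea can be sketched roughly like this. Again, this is illustrative Python rather than the PHP on the page; the function names, the choice of SHA-256 and the numbered sub-folders (“1”, “2”, ...) are all assumptions made for the example.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Checksum of a file already on disk, read in blocks."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for block in iter(lambda: handle.read(65536), b""):
            digest.update(block)
    return digest.hexdigest()


def store(upload_dir: Path, name: str, data: bytes) -> Path:
    """Save data under its original name, never renaming the file itself."""
    new_digest = hashlib.sha256(data).hexdigest()
    folder = upload_dir
    attempt = 0
    while True:
        target = folder / name
        if not target.exists():
            # Free slot: keep the name exactly as uploaded.
            folder.mkdir(parents=True, exist_ok=True)
            target.write_bytes(data)
            return target
        if sha256_of(target) == new_digest:
            # Same name and same content: reuse the existing copy.
            return target
        # Same name, different content: try the next numbered sub-folder.
        attempt += 1
        folder = upload_dir / str(attempt)
```

Uploading the same file twice returns the existing path, while a different file with the same name lands in a sub-folder with its name untouched.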

Eventually I reached a sort of ceiling. I wanted the option to have the files saved by the Internet Archive’s Wayback Machine and automatically uploaded to Virus Total for scanning. While it did a basic implementation of both, it couldn’t do it in a way that didn’t slow down uploads a lot, and we were not getting anywhere. That’s when I paid Ollama $20 so I could run the larger qwen3-coder:480b in the cloud, which immediately found more issues with the code, made other suggestions, and ended up moving the really slow Wayback Machine saving to a background task (after I told it to). I ended up with a page that:

- Supports multi file upload with drag and drop.

- Allows me to upload files larger than the limit set by the server or CDN.

- Allows me to convert images to WebP with a single click.

- Good file name sanitisation.

- Doesn’t change the file name if one already exists. Also compares checksums to avoid duplication.

- Optional (and very basic) Virus Total (via API) and Wayback Machine integration.

- Good light and dark mode support.

- A nice “configuration” section in the code where I can set the WebP quality, chunk size for large uploads, enable or disable Virus Total and Wayback Machine.
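
The “larger than the server limit” item works by sending a file in smaller pieces and putting them back together on the server. A minimal Python sketch of that idea follows; the real page does this in the browser and in PHP, and `iter_chunks`, `receive_chunk` and the `.part` file are hypothetical names used only here.

```python
from pathlib import Path
from typing import Iterator


def iter_chunks(path: Path, chunk_size: int) -> Iterator[tuple[int, bytes]]:
    """Client side: yield (index, bytes) pieces no larger than chunk_size."""
    with path.open("rb") as handle:
        index = 0
        while chunk := handle.read(chunk_size):
            yield index, chunk
            index += 1


def receive_chunk(part_path: Path, index: int, chunk: bytes, chunk_size: int) -> None:
    """Server side: write one piece at its offset in the partial file."""
    # Seeking to index * chunk_size means pieces can arrive in any order.
    mode = "r+b" if part_path.exists() else "wb"
    with part_path.open(mode) as handle:
        handle.seek(index * chunk_size)
        handle.write(chunk)
```

Each request stays under the server or CDN limit as long as the chunk size set in the configuration section is smaller than that limit.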

While it’s not perfect (more at the end of the post), it works well enough for me. After 3 days working on and off on this, using the page, fixing different bugs and making improvements, here’s the page in action:

The XML sitemap generator

After doing this, I decided to ask it to create a bash script to scan a server directory and then create a sitemap. This is something that I’ve been meaning to do for a while on a site that is essentially static HTML and has many sub-directories.

This one was really simple. It did a good job on the first attempt, but I still had to ask it to include the top level directory and to add features I hadn’t thought about at first, like excluding some areas of the site, manually including some URLs, and removing the <lastmod> date, which it was taking from each file’s “last modified” date and which didn’t make sense to use in this case.
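
The actual script is bash, but the logic is easy to show as a short Python sketch. Everything here is an assumption for illustration: the function name `build_sitemap`, excluding areas by directory name, treating `index.html` as the directory URL, and only looking at `.html` files.

```python
from pathlib import Path
from xml.sax.saxutils import escape


def build_sitemap(root: Path, base_url: str, exclude=(), extra_urls=()) -> str:
    """Scan root for HTML pages and return sitemap XML without <lastmod>."""
    base = base_url.rstrip("/")
    urls = {base + "/"}  # always include the top level directory
    for page in root.rglob("*.html"):
        rel = page.relative_to(root)
        # Skip anything inside an excluded area of the site.
        if any(part in exclude for part in rel.parts):
            continue
        if rel.name == "index.html":
            parent = rel.parent.as_posix()
            urls.add(f"{base}/" if parent == "." else f"{base}/{parent}/")
        else:
            urls.add(f"{base}/{rel.as_posix()}")
    urls.update(extra_urls)  # manually included URLs

    lines = [
        '<?xml version="1.0" encoding="UTF-8"?>',
        '<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">',
    ]
    lines += [f"  <url><loc>{escape(url)}</loc></url>" for url in sorted(urls)]
    lines.append("</urlset>")
    return "\n".join(lines) + "\n"
```

A call such as `build_sitemap(Path("/var/www/site"), "https://example.com", exclude={"private"})` would return the XML as a string, ready to be written to `sitemap.xml`; the path and domain in that call are placeholders.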

The Virus Total upload page

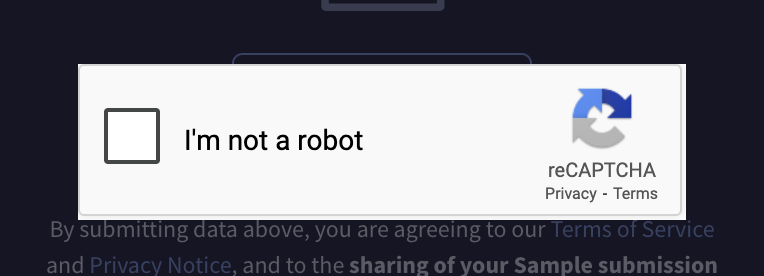

I use Virus Total a few times a week. It’s a nice tool, but uploading files has become a pain in the ass because of captchas. I don’t know if it’s the IP, some privacy option or extension or the fact that I use Firefox, but it annoys me. A lot.

I knew that I could upload files via their API, as I had done something like that on my file upload page, and after having to select another two pages of stairs, I decided to create another upload page, but this time just for Virus Total.

Since this was going to be a bit more complex with the need for a queue system due to VT’s 4 submissions per minute limit, I started with the larger qwen3-coder:480b using the cloud servers. I don’t know if it was my prompts that were not very good, but it struggled more with this task. It was able to create a basic front end right away and to come up with a way to create a queue that wouldn’t disappear if the page was closed, but nothing worked right away. I managed to implement some of the same features, like uploads in smaller segments to be able to use VT’s 650MB file limit, but I couldn’t make the queue work, larger files would fail because it couldn’t make the large upload endpoint work, it couldn’t even generate a link to the results page correctly…

This was a bit weird as I had some of the features working on the upload page, but in this case and probably partially due to my prompts, I couldn’t get it to work well. We were both stuck.

Gemini to the rescue

I decided to try other open models, but they were either too slow or couldn’t fix the issues either. Then I remembered that the Ollama Pro plan I had also included some “premium requests” to Google’s “Gemini 3 Pro Preview”.

I asked it to look at the code, see why larger uploads were failing and find other issues with the code. It was much better. After ~15 messages, I had everything working and also had other features implemented. It still required some nudging and pointing out small issues, and it also made a wrong assumption about VT’s API (which it noticed after I pointed out that the uploads still failed), but we got there. One significant difference compared to qwen3-coder is that I could give it different tasks and it would actually follow them, so more could be done with each reply.

And so, now I have a Virus Total upload page that:

- Allows me to upload multiple files at once without seeing a single captcha.

- Uploads up to 650MB even though my server doesn’t do files larger than 100MB.

- Has a queue to handle the 4 submissions per minute API limit and other errors.

- Gives me a link to the scan page.
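
The queue is the interesting part, so here is a small Python sketch of how a “4 submissions per minute” limit can be respected. It is not the code from the page: the class name `RateLimitedQueue` and its parameters are made up, and it leaves out the persistence and error handling the real queue has.

```python
import time
from collections import deque


class RateLimitedQueue:
    """Send queued items without exceeding max_calls per period (seconds)."""

    def __init__(self, max_calls=4, period=60.0, clock=time.monotonic, sleep=time.sleep):
        self.max_calls = max_calls
        self.period = period
        self.clock = clock    # injectable so the logic can be tested
        self.sleep = sleep
        self.pending = deque()
        self.sent = deque()   # timestamps of recent submissions

    def add(self, item):
        self.pending.append(item)

    def run(self, submit):
        """Call submit(item) for every queued item, waiting when needed."""
        results = []
        while self.pending:
            now = self.clock()
            # Forget submissions that are older than one period.
            while self.sent and now - self.sent[0] >= self.period:
                self.sent.popleft()
            if len(self.sent) >= self.max_calls:
                # Limit reached: wait until the oldest submission expires.
                self.sleep(self.period - (now - self.sent[0]))
                continue
            item = self.pending.popleft()
            self.sent.append(self.clock())
            results.append(submit(item))
        return results
```

With six files queued, the first four go out immediately and the last two wait for the window to reopen.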

I could have added more features, like displaying the number of detections or have a scan history, but I don’t need that. I wanted to bypass the captchas and it does that job well.

Thoughts on this experience

While I wasn’t completely unfamiliar with “AI” and LLMs, I don’t really use them. I just don’t need most of what they provide. As long as search results are good, regular search still works well for me and with fewer hallucinations (not to mention that most sites need visits to keep running). I rarely need to summarize stuff or deal with large quantities of information. I also don’t have much use for video, image or audio creation and also avoid AI-created media content. AI features on my phone are disabled. I do translate content, but it’s usually webpages and the browser (Firefox) handles that (it runs locally, but I’m not sure if it falls under the “AI” umbrella).

Long story short, this was the first time I used LLMs for something really useful and while not the smoothest experience, I think it’s a great tool to have. Like any tool, you probably don’t want to use it for everything or all the time, but it did allow me to do something I couldn’t do before and that was nice.

In hindsight, since I decided to pay $20 to run a larger model on the cloud and ended up using a paid model like Gemini 3 to finish the most complex task, I could have taken those same $20 and paid for Google One or Anthropic’s Claude, which seems to be popular for coding, and perhaps I would have gotten everything done in less time and with less work.

In any case, I do like the idea of being able to do this locally, without relying on a service or sending data to 3rd parties. There are efficient models, like Google’s gemma3, which can do translations and summaries pretty well without using too many resources. While probably not a huge task, the upload page was mostly created with qwen3-coder:30b running locally. I decided to use qwen3-coder:480b and the cloud because I didn’t want to start implementing some features from scratch with different instructions to get a different result, but with time and patience, I probably could have done it.

Something to try in the future is doing this directly in a code editor. During this test, I was copying the code from the “chat” window and saving it to the file. It was not ideal.

Where’s the code?

Firstly, don’t expose this to the internet and don’t trust the code to work, to work well or to work at all. It works well enough for me, but it might not work for you.

For the Virus Total page, here’s the code. Then read the “read-me.txt” and set your API key in the code:

The file upload page I won’t share because it saves different files to different directories, which only I need. The Virus Total and Wayback Machine implementations are also very rudimentary, with Virus Total blocking the upload completion until it can generate a URL or until a 10s timeout cancels that. The Wayback Machine part makes a curl request in the background to the /save/<url-here> endpoint and stops running after 30 seconds, regardless of whether the URL was saved or not. The user isn’t informed of a failure in either case, which is fine in my case as they’re only “nice to have” features that can fail, but you can’t rely on them. It’s too customized for my needs and too incomplete.
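
For the curious, the fire-and-forget Wayback Machine request looks roughly like this when sketched in Python. The page itself uses curl from PHP; `save_to_wayback` and the injectable `fetch` argument are hypothetical and only there to keep the example short and testable.

```python
import threading
import urllib.request


def _default_fetch(url: str, timeout: float) -> None:
    """Request the URL and ignore the outcome, success or failure."""
    try:
        # The timeout applies to the connection and each read,
        # so it is only an approximate 30 second cut-off.
        with urllib.request.urlopen(url, timeout=timeout):
            pass
    except OSError:
        # Network errors, HTTP errors and timeouts are all dropped:
        # this is a "nice to have" that is allowed to fail silently.
        pass


def save_to_wayback(file_url: str, timeout: float = 30.0, fetch=_default_fetch) -> threading.Thread:
    """Ask the Wayback Machine to save file_url without blocking the upload."""
    save_url = "https://web.archive.org/save/" + file_url
    worker = threading.Thread(target=fetch, args=(save_url, timeout), daemon=True)
    worker.start()
    return worker
```

Because the worker is a daemon thread and errors are swallowed, the caller never learns whether the save worked, which matches the limitation described above.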
